A 16-year-old high school football player in Baltimore says he was surrounded by armed police after his bag of Doritos triggered his school’s new artificial intelligence security system — a glitch that could have ended tragically.

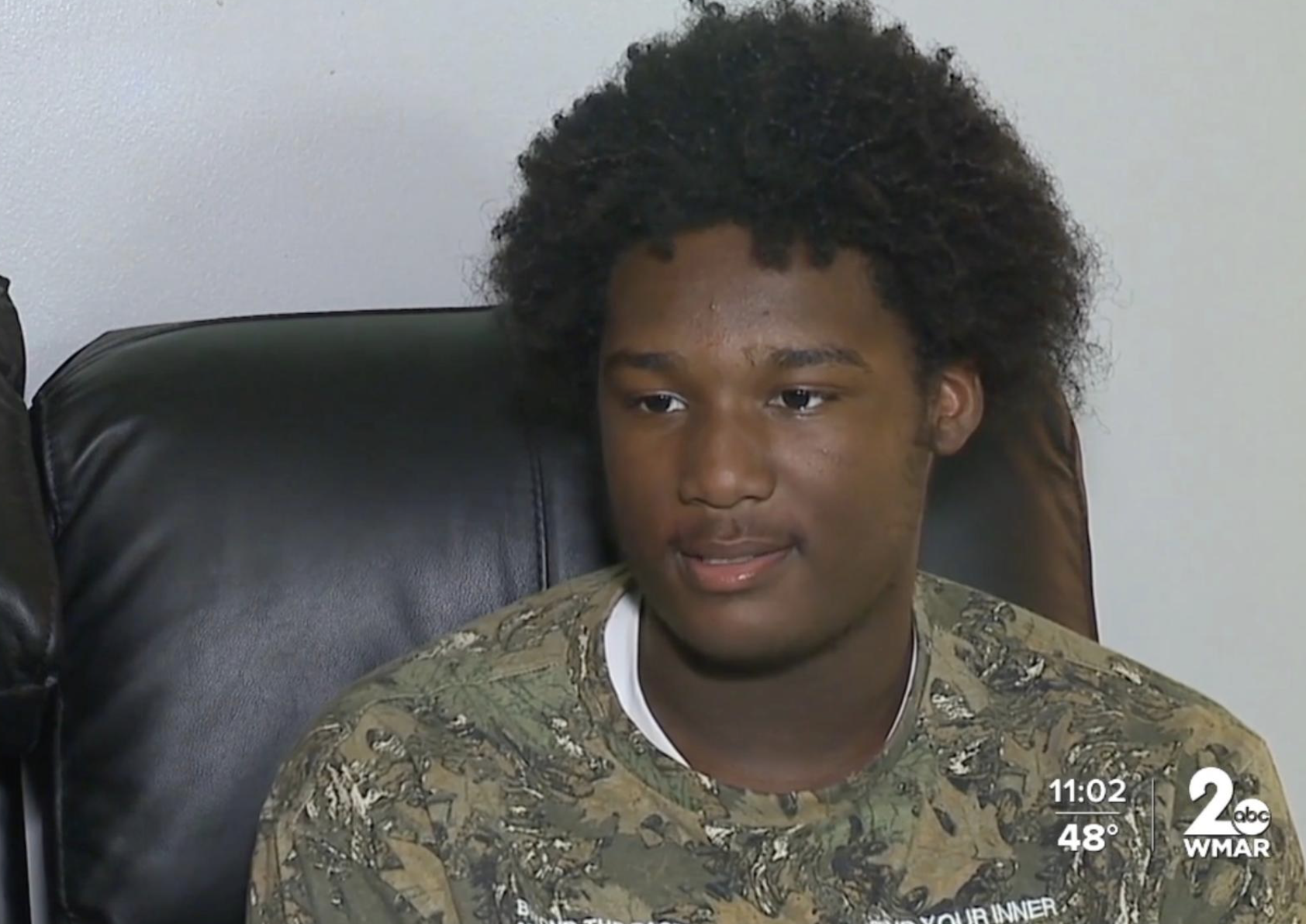

Taki Allen, a sophomore at Kenwood High School, told reporters he had just wrapped up football practice on October 20 and was chatting with teammates while finishing his chips. Seconds later, he says, the peaceful afternoon turned into chaos.

“Out of nowhere, eight cop cars pull up,” Allen recalled. “They all jump out with guns pointed at me, yelling to get on the ground. I was terrified — I didn’t even know what was happening.”

According to police, the school’s AI-powered surveillance system, made by Omnilert, had flagged a possible firearm on campus after mistaking the shiny crumpled Doritos bag for a weapon. The alert prompted an immediate law enforcement response.

Body camera footage obtained by CBS shows officers shouting commands at the teen as he raises his hands, visibly shaking. “Do you have a gun on you?” one officer asks. “What? No,” Allen replies, confused and frightened.

Minutes later, officers reviewed the flagged footage and discovered the “gun” was nothing more than a snack wrapper tossed in a trash can. One officer can be heard sighing, “I guess just the way you were eating chips … it picked it up as a gun.” Another mutters, “AI is not the best.”

“I thought I was going to die over a bag of chips,” Allen said. “It’s crazy.”

‘The Program Did What It Was Supposed to Do’

Baltimore County Schools Superintendent Myriam Rogers defended the AI system during a Thursday press conference, saying Omnilert’s software “performed as designed” and that police were following established safety protocols.

“In this case, the program did what it was supposed to do,” Rogers said. “We’d rather have a false alarm than a tragedy.”

The company behind the software, Omnilert, touts its AI gun detection technology as capable of identifying weapons within seconds. It is currently being used in hundreds of schools and public buildings across the U.S., part of a growing nationwide push to use artificial intelligence to prevent mass shootings.

But critics say the technology is still dangerously unreliable.

“AI misidentification isn’t just embarrassing — it’s potentially deadly,” said Dr. Marcus Reid, a technology ethicist at Johns Hopkins University. “When law enforcement acts on flawed data, the risk to human life skyrockets.”

Teen Now Afraid to Go Outside

Allen says he’s been deeply shaken by the experience. “I don’t feel safe waiting outside anymore,” he said. “Now I just stay inside until my ride comes. I don’t want the cameras to mistake me for something again.”

Parents and community members have begun demanding an independent review of the district’s use of AI surveillance. “This could have gone very wrong,” said one parent at a Friday school board meeting. “What happens next time, if a kid makes the wrong move?”

For now, Allen says he’s just grateful to be alive — and that next time, he’ll think twice before opening a snack near one of the cameras. “I can’t believe a bag of chips almost got me killed,” he said quietly.


This is why I hate AI! If that child had moved away or moved his hand differently, he could have been killed! What about the next school? It’s unreliable and dangerous! Look how Trump uses it to make up fake stuff about others. Anyone can make up all kinds of bad stuff, frame someone, etc., using AI.

Wow. I’m surprised no one called the police racist.